Geographically Distributed Development (GDD) is a common strategy in the software world today. Organizations are gaining experience in developing software globally and are discovering that the competitive demand for best-in-class, high-quality applications requires greater agility in quality management. Unfortunately, IT budgets are not keeping up with the staffing that quality management requires, and the common response is to accelerate quality management by leveraging global teams. This article compares and contrasts agile GDD testing strategies for effecting quality management.

IBM has one-third of a million employees worldwide and we've succeeded at GDD for more than 15 years. Most importantly, we've had the opportunity to try out a range of development strategies in practice, strategies which many organizations are just considering today. Our strategies in agile quality management elaborated in this article will focus on the intimate link of requirements and change management. We will explore three strategies for geographically distributed quality management, the associated challenges with doing so, and how to address these challenges.

Quality Management

Quality management is the process of ensuring that the defined business requirements have been successfully translated into a working product. In traditional environments, quality teams often find that a significant amount of their work is not aligned with business requirements, but instead in testing unintended changes to the end product as a result of breakdowns in communication and human error. In this state, the role of the quality engineer is reduced to a "last line of defense tester." Quality teams operating in this lower state of existence are failing to realize the significant value they can bring to their software delivery organization.

Quality engineers require intimacy with the business domain, requirements, and stakeholder motives just like developers. Traditionally, when business analysts write requirements, they may leave out minute, important details assuming they are "common knowledge" in the business domain. Relying only on a requirements specification proves to be risky in practice. One agile approach is to capture requirements as "customer tests," what traditionalists would refer to as function tests, whenever possible. By doing so you arguably eliminate the gaps that are introduced by statically capturing requirements and then recapturing the information as tests - in short, you cut out the documentation "middle man." However, not all requirements can be captured as tests and confirmatory testing (validation against requirements) is just part of the overall quality picture. More to come on this issue below.

The Challenges with GDD

Common approaches to agile software development have everyone involved in delivery collaborating together in one location. This is definitely preferable, but in practice this isn't always an option. A common strategy is for organizations to have independent quality teams which validate the work of development teams, even when the development teams are doing Test-Driven Development (TDD), and those independent testers aren't always co-located with the developers. When quality teams are geographically distributed from the developers, who in turn might even be separated from the stakeholders, it becomes very difficult for them to stay in synch with changes to requirements and application code. This results in a lot of cross-talk when the remote quality team isn't working with the most recent version of the system.

The result is risk to the integrity and quality of the end product. In order to reduce the risks of remote quality management and offshore testing, you must ensure that everyone has a common focus and stays in synch. Both development and quality management are driven ultimately by new and changing requirements and as a result it's critically important that these teams have visibility and are a collaborative part of the change management process.

It is fundamental to recognize that GDD potentially introduces three critical barriers to communication - distance, time, and culture - which your team must overcome to the best of its ability. Even if your team is spread amongst different buildings within the same city, you have introduced communication challenges preventing you from doing something as simple as walking down the hall to talk face-to-face with someone. In quality management, face-to-face communication with development team peers is even more important, possibly because of the old adage that it's better to deliver bad news face-to-face. When teams are in different time zones you also introduce a time lag between asking questions and getting answers, potentially further dividing the team into different "sides."

Different cultures can also increase barriers to communication; even something as simple as the word "yes" can mean significantly different things in different countries. For example, when someone replies yes to your question about whether security has been handled by the system, does that mean that it in fact has been handled, that they've considered the issue, or that they have simply heard your question but have taken no action yet? All three interpretations are valid given the appropriate cultural context, and without a solid understanding of that context you are constantly at risk of making serious mistakes.

To overcome these challenges, you will need to invest in communication strategies which you normally wouldn't implement for co-located teams. The obvious ones are telecommunication strategies such as teleconferencing, videoconferencing, web-based conferencing, and online chat software. What isn't always obvious is that GDD teams need to bond just like non-GDD teams, implying the need to fly at least some, if not all, unfamiliar members of the team together to a single location at the beginning of the project. We all know that there is a qualitative difference between working with people you have met in person and working with people you have only met electronically. Our experience is that for any reasonably major GDD effort, it is worth the price to build these initial bonds. Throughout the project, you will also need to fly people between sites to keep communication as effective as possible. These "ambassadors" may spend a couple of weeks at a foreign site before flying home to their primary location.

In the short term this can be expensive, which is why many projects choose not to do this. However, a recent survey by Dr. Dobb's Journal found that offshoring projects only have a 43% success rate compared with 63% for traditional and 72% for Agile. [i] Considering the risk premium associated with offshoring, we advise that organizations fight against their instinctual "penny wise and pound foolish" strategies. Relationships established with this strategy are not soon lost. As you establish partnerships and relationships with GDD teams and providers, the cost of doing business with them decreases as your working relationship improves. Finally, collaborative technologies such as Wikis, workflow management systems, and email are even more important for GDD teams than for non-GDD teams. We like to think of tools such as these as a minimal requirement for any team, but they're clearly not sufficient to address all of your communication needs.

Towards an Agile Strategy

When organizations first adopt iterative techniques within a GDD environment, they typically adopt them for the development team but still leave quality management and testing for later phases of the lifecycle. As Barry Boehm pointed out years ago, the average cost of addressing a defect rises exponentially the longer it takes you to find it after it has been injected.[ii] The implication is that the end of the lifecycle is the worst possible time to focus on quality management and testing.

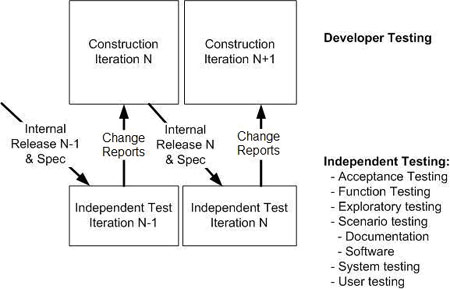

The first step is to realize that it's advantageous to focus on quality management and testing throughout the lifecycle, so at the conclusion of each iteration the updated specification and the current version of the system are provided to the quality management team as depicted in Figure 1. With this strategy, developers ideally should be unit testing to the best of their ability and then handing off what they have to the quality team, which is invariably responsible for the majority of the testing. Unfortunately, some development teams choose not to test their code and thereby place all the burden of testing on the quality team. We recommend against this but recognize that developer testing may be too radical for some organizations to start with.

This strategy requires your quality team to either have a test environment available all of the time, or be able to easily set up the environment when it's needed and then tear it down quickly to make it available for other teams. With this strategy you may leverage virtualization by taking a snapshot of your configured and integrated development environment at the end of each iteration and sending or making that virtual machine available to your geographically distributed quality management team. The quality team would then test the last iteration while the development team progresses on the current sprint/iteration. Or you may use deployment tools such as BuildForge or InstallAnywhere that automate the movement and promotion of environments in lockstep with iteration releases. As your organization leverages several quality and deployment teams through multi-sourcing, this need becomes more important in order to control change management overhead and remain agile.

This approach is definitely a step in the right direction because it pushes quality management forward in the lifecycle, reducing cost, risk, and potentially the overall schedule. However, this strategy is only one of several steps that should be considered. First, it requires a significant amount of bureaucracy to communicate and coordinate changing requirements and code to the quality management team. Although this strategy introduces quality management early in product development and maintenance, it takes a reactive approach which doesn't keep the dedicated quality team in lockstep with the rest of the group. It creates a temporal distribution between the quality management team and the development team because they are focused on different deltas of changes and requirements.

In GDD, the impact of this distribution is even more pronounced and dulls the focus of the global software delivery team as a whole. Second, the change reports must be described in detail to provide sufficient information to the developers so that they can reproduce the change within their own environments. Once again, your overall cost increases. Third, this approach can be culturally disconcerting for traditional testers who want the entire system in place with the finished specification against which to test. This strategy promotes a "mini-waterfall" mentality within the team instead of a truly iterative one, which motivates long iterations and thereby increases cost and risk.

Incremental Agile Testing

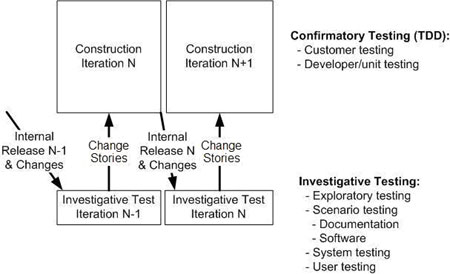

Most organizations will find that the easiest entry point into global agile quality management (GAQM) is to adopt a TDD approach within the development team. With TDD you specify a requirement via a single customer test using a tool such as FIT or FitNesse, or an aspect of the design via a developer test using a tool such as JUnit, and then write just enough production code to fulfill that test. Responsibility for achieving required quality levels is shared between the development and quality management teams. The majority of testing is done by the development team, and this testing is the agile equivalent of confirmatory testing (validation against the specification) because the tests written via TDD reflect your current understanding of what your stakeholders want. This reduces the overall workload on the quality management team, freeing them to invest time in higher value investigative tests. Developers are in the best position to efficiently and effectively perform confirmatory tests on their own work, particularly when they are collaborating closely with their stakeholders.
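
As a minimal sketch of the test-first rhythm described above, the Python example below uses the standard `unittest` module in place of the FIT/FitNesse or JUnit tools named in the text. The `order_discount` rule and its threshold are hypothetical, invented purely for illustration: the tests state the requirement first, and the function holds just enough production code to satisfy them.

```python
import unittest


def order_discount(order_total: float) -> float:
    """Just enough production code to satisfy the tests below.

    Hypothetical rule (an assumption for this sketch): orders of 100.00 or
    more earn a 10% discount; smaller orders earn none.
    """
    if order_total >= 100.0:
        return round(order_total * 0.10, 2)
    return 0.0


class OrderDiscountTest(unittest.TestCase):
    """In TDD these tests are written first; each one states a requirement."""

    def test_small_order_gets_no_discount(self):
        self.assertEqual(order_discount(99.99), 0.0)

    def test_large_order_gets_ten_percent(self):
        self.assertEqual(order_discount(200.0), 20.0)

    def test_threshold_is_inclusive(self):
        self.assertEqual(order_discount(100.0), 10.0)
```

Saved to a file, the tests can be run with `python -m unittest <file>`. Because they encode the team's current understanding of the requirement, they double as the confirmatory regression suite for later iterations.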

With this strategy, depicted in Figure 2, the development team produces working software after each iteration taking a TDD-based approach. At the end of the iteration, it deploys the new version of the system into the test environment. A combination of virtualization and deployment solutions can be used to control the change and service management overhead. A greater degree of automation is introduced into this approach for cohesive global change management. For example, a standardized, auto-generated bill of materials report may be created with the iteration release summarizing the changes implemented in that iteration.
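
One way to picture the auto-generated bill of materials mentioned above is the following Python sketch. It is not the format of any particular deployment tool; the `Change` fields, the `bill_of_materials` function, and the sample identifiers are all assumptions made for illustration. In practice the list of changes would come from your change management system rather than being typed in by hand.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Change:
    """One change delivered in an iteration (fields are illustrative)."""
    item_id: str   # hypothetical work item identifier
    kind: str      # e.g. "story" or "change story"
    summary: str


def bill_of_materials(iteration: str, changes: List[Change]) -> str:
    """Render a plain-text summary of what an iteration release contains."""
    lines = [f"Iteration {iteration}", f"Changes delivered: {len(changes)}"]
    # Group by kind so a remote quality team can see where to focus first.
    for kind in sorted({c.kind for c in changes}):
        lines.append(f"{kind}:")
        of_kind = sorted((c for c in changes if c.kind == kind),
                         key=lambda c: c.item_id)
        for change in of_kind:
            lines.append(f"  {change.item_id}  {change.summary}")
    return "\n".join(lines)


# Hypothetical sample data for one iteration release.
report = bill_of_materials("7", [
    Change("S-12", "story", "Customer can export invoices"),
    Change("C-03", "change story", "Login page rejects valid e-mail"),
    Change("S-09", "story", "Search results are paginated"),
])
print(report)
```

Shipping a report like this with every iteration release gives the distributed quality team the same picture of the delta that the developers have, without a separate hand-written handoff document.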

Once the quality team has access to the latest version, it begins performing investigative tests on it; these tests are often more complex and more valuable than confirmatory testing. Investigative testing explores issues such as system integration testing, exploratory testing, usability testing, security testing, and so on, that are typically not the development team's primary focus. As the quality team finds potential problems such as missing functionality, misalignment with business requirements, or outright defects, it reports them back to the development team in the form of "change stories." These change stories are treated just like other requirements - they are estimated, prioritized, and put on the work item stack. As the change stories are addressed, the tests which address them are added into the regression test suite run by the developers: once the change is identified via investigative testing, it becomes a known requirement and therefore is validated regressively via the confirmatory approach of TDD. This allows the quality team to continually focus on higher value quality management activities.
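
The handling of change stories described above can be sketched in a few lines of Python. The `WorkItem` and `WorkItemStack` names, the numeric priority convention, and the sample items are assumptions for illustration only. The point is simply that a change story raised by the quality team is estimated and prioritized through the same mechanism as any other requirement.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class WorkItem:
    title: str
    priority: int                 # assumed convention: 1 is most important
    estimate_points: int
    origin: str = "stakeholder"   # "quality team" marks a change story


@dataclass
class WorkItemStack:
    """Prioritized stack of work; the highest-priority item sits on top."""
    items: List[WorkItem] = field(default_factory=list)

    def add(self, item: WorkItem) -> None:
        self.items.append(item)
        # list.sort is stable, so equal priorities keep their arrival order.
        self.items.sort(key=lambda i: i.priority)

    def next_item(self) -> WorkItem:
        return self.items.pop(0)


stack = WorkItemStack()
stack.add(WorkItem("Paginate search results", priority=2, estimate_points=3))
stack.add(WorkItem("Export invoices", priority=3, estimate_points=5))

# A change story found through investigative testing joins the same stack.
stack.add(WorkItem("Session token not invalidated on logout",
                   priority=1, estimate_points=2, origin="quality team"))

first = stack.next_item()
print(first.title, "-", first.origin)
```

Once the developers address the item, the test that reproduces it joins the regression suite, so the quality team does not have to re-investigate the same issue in later iterations.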

There are several reasons why an independent quality management team is beneficial. First, it increases the chance that you'll find unanticipated defects. Although TDD is a very effective technique, it can be fragile in practice because it relies on the ability of your stakeholders to exercise full application coverage rather than just the parts of the application that they are directly invested in. [iii] Second, the integrity of your quality management process is at a reduced risk of compromise when a professional, independent quality team has validated it. Third, investigative testing is an exploratory technique usually requiring more sophisticated tools and methods than those commonly used for TDD by developers. The cost/value ratio for investigative testing methods and tools becomes diluted when they are put to general use. For example, it's not cost effective for cross-functional development teams to conduct investigative testing because the tools and techniques are rarely applied in practice to a depth that delivers significant value. Fourth, because the interface between the teams is very simple and the change management complexity is abstracted and automated properly, it is easy to leverage different teams wherever you need to. This increases your global reach and flexibility.

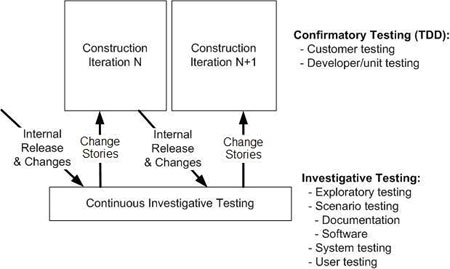

Continuous Agile Testing

In a fully agile approach the quality management team's activities are in continuous lockstep with the development team, as shown in Figure 3. The quality management team is not focused solely on testing the last iteration of work completed; instead, it performs investigative testing on the current iteration, shortening the overall feedback cycle. Development teams participate in confirmatory testing of changes with their peers and collaborate with one another to formulate ideas for solutions to identified problems. In this approach, the quality management team has a reduced need to produce elaborate test plans because there is a smaller backlog of changes in each release. This continuous approach enables the quality management team to realize its full potential to accelerate the software delivery lifecycle, helping the organization realize a "follow-the-sun" approach with temporal distribution. Successful application of this approach requires a high degree of maturity in collaboration and understanding between GDD teams.

Once again, the quality management team identifies change stories and reports them back to the developers, often using a defect management tool such as FogBUGZ or ClearQuest. Interestingly, many agile GDD teams use these sorts of tools for all their requirements needs, a perfect example being the Eclipse development team which uses Bugzilla for this. Next generation IDEs such as Jazz have these sorts of requirement/defect management features self-contained, enabling close collaboration even in GDD situations. Continuous integration is leveraged in this approach and further integrated with automated deployment tools to manage change and service overhead between the GDD teams. This approach is made further resilient when security roles, workflow handoffs, server provisioning, and self service are managed and implemented by the change and release system.

Agile is Relative

There is no single solution for quality management in a GDD situation and your organization will need to choose the approach that works best for you. This is why we described three different strategies in this article. Although the continuous approach is "more agile" than the incremental approach, which in turn is more agile than the first strategy, the fact is that the first strategy may prove to be a sufficiently radical improvement over what you're doing today. Many people would like to have a single answer; the fact is that agile is relative and a quality management strategy which works well in one situation may not work well in others.

Global agile quality management is truly enabled when remote quality teams understand the complete story of changes and can provide feedback to the developers on a continuous basis throughout the project. This reduces the risk of miscommunication, incomplete communication, or often no communication of changes to distributed quality teams. However, this doesn't imply that you need a complex change control process, a change control board following an onerous process, or detailed documentation describing every potential change. The agile approach is to institute "just enough" change and test management in order to get the job done. A fully integrated agile quality management team has a continuous and collaborative feedback loop with the business analysts and customers, as well as the development team. An effective global change management solution should drive this and also provision and manage quality efforts so they don't overwhelm or derail project goals.

Lately, the Agile community has started applying its techniques at scale and geographic distribution is one of several critical scaling factors which are being explored (team size, regulatory compliance, governance, and complexity are other factors). We've still got a lot to learn about GDD, but luckily there are some very good resources out there that can be leveraged along the way. IBM has succeeded at GDD for years and we're very happy to share our hard-earned experiences with you.[iv]

About the Authors

Khurram Nizami is responsible for worldwide enablement of the IBM Rational team products. Khurram's expertise is in helping customers and IBM with applying practical solutions to challenges the software delivery community faces today. Khurram's specialty and area of interest is Geographically Distributed Development (GDD). Prior to IBM Rational, he was a manager for Cap Gemini Ernst & Young in the Systems Development and Integration practice where he was a project manager and technical lead for a number of global software delivery projects. Khurram holds a Master of Science in Management of Technology from the University of Minnesota and is a PMI certified Project Management Professional (PMP).

[i] Ambler, S.W. (2007). IT Projects Success Rates Survey: August 2007. www.ambysoft.com/surveys/success2007.html

[ii] Boehm, B. (1981). Software Engineering Economics. Englewood Cliffs, NJ: Prentice Hall.

[iii] Siniaalto, M. and Abrahamsson, P. (2007). Does Test-Driven Development Improve the Program Code? Alarming Results from a Comparative Case Study. Proceedings of CEE-SET 2007 Conference.

[iv] Parvathanathan, K., Chakrabarti, A., Patil, P.P., Sen, S., Sharma, N., and Johng, Y.B. (2007). Global Development and Delivery in Practice: Experiences of the IBM Rational India Lab, www.redbooks.ibm.com/abstracts/sg247424.html. This book, which explores GDD from the vantage point of IBM India's offshore delivery center, can be downloaded free of charge.